| Author | Published |
|---|---|
| Jon Marien | February 17, 2026 |

What it is

A technique for finding and triggering limit overrun race conditions with Burp Repeater’s parallel request features, which time multiple requests precisely enough that they all arrive inside the same race window.

Core Idea

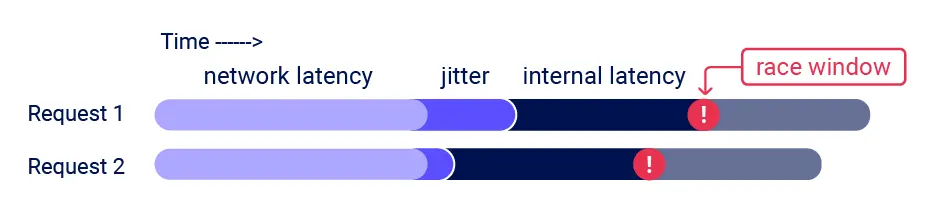

The goal is simple in theory: hit a rate-limited or single-use endpoint with multiple requests so quickly that at least two of them land inside the same race window. The hard part is the timing. Even if you fire all requests at the exact same moment, network jitter, server-side latency, and unpredictable processing order all work against you. The race window can be just milliseconds wide, sometimes less.

Burp Suite 2023.9+ addresses this directly with two techniques depending on HTTP version:

- HTTP/1 → Uses last-byte synchronization: holds back the final byte of each request and sends them all at once, forcing near-simultaneous arrival

- HTTP/2 → Uses the single-packet attack: bundles 20–30 complete requests into a single TCP packet, completely eliminating network jitter as a variable

The single-packet attack is the more powerful of the two. Because all requests travel together in one packet, the network cannot delay or reorder them relative to one another, so only server-side timing differences remain.
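The last-byte synchronization idea can be sketched in a few lines of Python. This is only an illustration of the principle, not Burp's implementation: the function name `last_byte_sync_send` is hypothetical, and the caller is assumed to supply already-connected sockets (ideally with `TCP_NODELAY` set) and raw HTTP/1.1 request bytes. The single-packet attack is not sketched here because it depends on HTTP/2 framing.

```python
import socket
from typing import Sequence


def last_byte_sync_send(socks: Sequence[socket.socket],
                        requests: Sequence[bytes]) -> None:
    """Send each request in two phases so the final bytes leave together.

    Hypothetical helper for illustration only. The server cannot start
    processing a request until its last byte arrives, so withholding that
    byte on every connection lets the slow part of the transfer finish
    before the race begins.
    """
    if len(socks) != len(requests):
        raise ValueError("need exactly one request per socket")

    # Phase 1: send everything except the final byte on every connection.
    for sock, request in zip(socks, requests):
        sock.sendall(request[:-1])

    # Phase 2: release the withheld bytes back to back, with no other
    # work in between, so they leave as close together as possible.
    for sock, request in zip(socks, requests):
        sock.sendall(request[-1:])
```

In practice each socket would be a separate connection to the target, opened and warmed up before the first phase. Only test systems you are authorized to assess.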

Why it’s Bad / Impact

- Makes race condition exploitation significantly more accessible and reliable

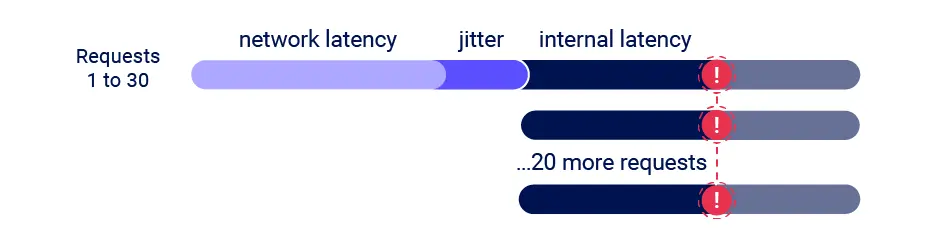

- Sending 20–30 simultaneous requests dramatically increases the chance of two hitting the race window at the same time

- Compensates for server-side jitter (internal latency variations) through volume: the more requests sent, the more chances for a collision

- Turns what was previously a difficult, timing-sensitive manual attack into a repeatable, tooled process

How to Use It (High Level)

- Identify a single-use or rate-limited endpoint with meaningful security impact (e.g., discount codes, gift cards, login rate limits)

- Duplicate the request into multiple Burp Repeater tabs and add those tabs to a tab group

- Send the group in parallel; Burp automatically uses last-byte synchronization for HTTP/1 and the single-packet attack for HTTP/2

- Send 20–30 requests at once during initial discovery to account for server-side jitter

- Compare the responses: if more requests succeeded than the limit allows, the limit was bypassed and the endpoint is vulnerable
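To see what a positive result looks like without touching a real target, the steps above can be rehearsed against a toy in-process model. Everything here is an assumption made for illustration: `ToyCouponEndpoint` is a hypothetical single-use coupon handler with a deliberately wide check-then-act gap, and `send_parallel` uses a thread barrier as a stand-in for Burp's parallel send.

```python
import threading
import time


class ToyCouponEndpoint:
    """Hypothetical single-use coupon with a check-then-act flaw."""

    def __init__(self, race_window: float = 0.2) -> None:
        self.used = False
        self.redemptions = 0
        self.race_window = race_window
        self._count_lock = threading.Lock()

    def redeem(self) -> bool:
        if self.used:                  # check: has the coupon been used?
            return False
        time.sleep(self.race_window)   # race window: work between check and act
        self.used = True               # act: mark the coupon as used
        with self._count_lock:         # the lock protects only the counter
            self.redemptions += 1
        return True


def send_parallel(endpoint: ToyCouponEndpoint, n_requests: int = 20) -> int:
    """Release n_requests 'requests' together and return the redemption count."""
    barrier = threading.Barrier(n_requests)

    def worker() -> None:
        barrier.wait()                 # all workers start at the same moment
        endpoint.redeem()

    threads = [threading.Thread(target=worker) for _ in range(n_requests)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return endpoint.redemptions


if __name__ == "__main__":
    count = send_parallel(ToyCouponEndpoint(), n_requests=20)
    print(f"redemptions: {count} (limit is 1)")
```

Any redemption count above 1 corresponds to the "limit bypassed" observation in the last step. Called sequentially, the same endpoint correctly rejects the second redemption, which is why the flaw only shows up under parallel load.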

Protect Against It

- Atomic operations and database locking remain the core fix (same as limit overrun defenses): the check and the update must happen as one indivisible step

- Detect abnormal bursts of identical requests from the same session at the network/WAF level

- Implement server-side request queuing for sensitive operations so concurrent requests are serialized, not parallelized
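As one possible shape for the atomic-operation defense, the sketch below folds the "is it unused?" check and the "mark it used" write into a single conditional `UPDATE`, using Python's standard `sqlite3` module. The table name `coupons`, the code `SAVE20`, and both function names are hypothetical; a production system would apply the same pattern in its own database and framework.

```python
import os
import sqlite3
import tempfile
import threading


def redeem_atomically(db_path: str, code: str) -> bool:
    """Redeem a coupon with one conditional UPDATE (check and act in one step)."""
    # isolation_level=None puts the connection in autocommit mode, so the
    # single statement is its own transaction.
    conn = sqlite3.connect(db_path, timeout=10, isolation_level=None)
    try:
        cur = conn.execute(
            "UPDATE coupons SET used = 1 WHERE code = ? AND used = 0",
            (code,),
        )
        # Exactly one caller can flip used from 0 to 1; everyone else
        # matches zero rows.
        return cur.rowcount == 1
    finally:
        conn.close()


def run_parallel_redemptions(n_requests: int = 20) -> int:
    """Fire n_requests concurrent redemptions and return how many succeeded."""
    with tempfile.TemporaryDirectory() as tmp:
        db_path = os.path.join(tmp, "coupons.db")
        setup = sqlite3.connect(db_path)
        setup.execute(
            "CREATE TABLE coupons ("
            "code TEXT PRIMARY KEY, used INTEGER NOT NULL DEFAULT 0)"
        )
        setup.execute("INSERT INTO coupons (code) VALUES ('SAVE20')")
        setup.commit()
        setup.close()

        barrier = threading.Barrier(n_requests)
        results = []
        results_lock = threading.Lock()

        def worker() -> None:
            barrier.wait()  # release all workers together
            ok = redeem_atomically(db_path, "SAVE20")
            with results_lock:
                results.append(ok)

        threads = [threading.Thread(target=worker) for _ in range(n_requests)]
        for thread in threads:
            thread.start()
        for thread in threads:
            thread.join()
        return sum(results)


if __name__ == "__main__":
    print("successful redemptions:", run_parallel_redemptions())
```

Because the database evaluates the `WHERE used = 0` condition and applies the write as one operation, there is no gap between check and act for a second request to slip into, however tightly the requests are synchronized.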